This article looks like it might end up being relatively important. The authors seem to have found a way to avoid both the need for tokenization in LLMs and the small context windows that give LLMs their short memories. Andrej Karpathy has a post going into more detail.

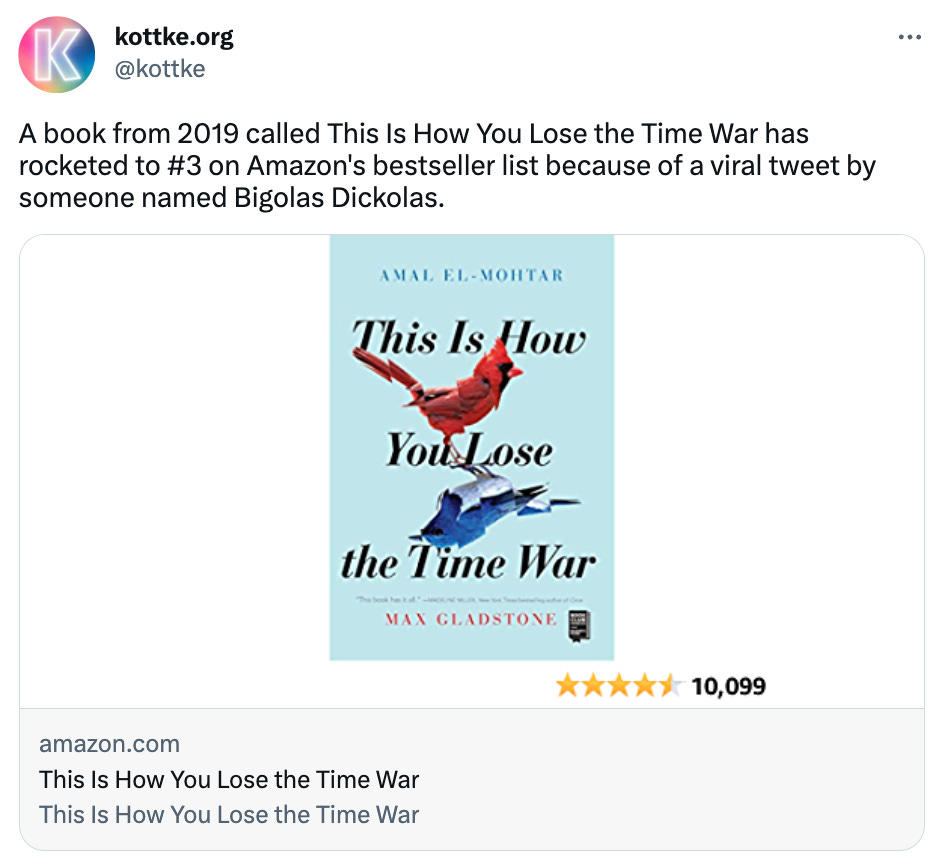

Here’s the tweet. Clearly, going viral on Twitter can still have an effect on the world.